Tools: Evolving Descriptive Text Of Mental Content From Human Brain Activity

The crackle of electricity inside your brain has long been too complex to decode. Artificial intelligence is changing that.

The woman didn't move, apart from the rise and fall of her breathing, her eyes fixed in concentration and her hand clenched in a fist. Words were forming on a screen in front of her, slowly coming together into whole sentences: sentences she couldn't say out loud.

The 52-year-old woman had been paralysed by a stroke 19 years earlier, leaving her unable to speak clearly. Here, however, her internal monologue was appearing before her eyes.

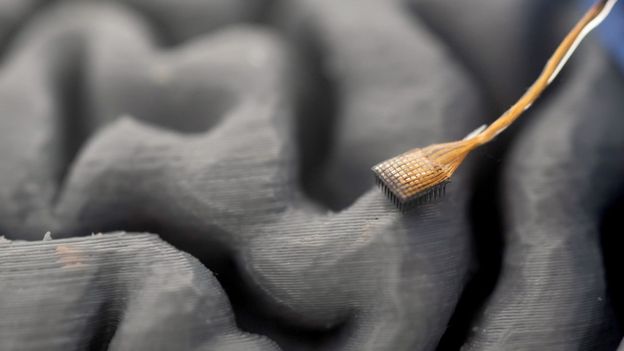

The woman, identified only as participant T16, had been fitted with a tiny array of electrodes, surgically implanted in a lobe at the front of her brain. Now a computer, powered by a form of artificial intelligence, was decoding the signals produced by her neurons as she imagined saying words, and the system was translating them into text on a screen. She was taking part in a study at Stanford University in California, US, alongside three patients with the neurodegenerative disease amyotrophic lateral sclerosis (ALS), to test a technique capable of translating thoughts into real-time text.

It was the closest scientists had come yet to a form of "mind reading".

The researchers unveiled their success in August 2025. A few months later, researchers in Japan revealed a "mind captioning" technique capable of generating detailed, accurate descriptions of what a person is seeing or picturing in their mind. It combined three different AI tools with non-invasive brain scans to translate a person's brain activity.

Both studies are the latest in a string of breakthroughs that are giving neuroscientists a new window into the inner workings of the human brain and providing opportunities to help people who are unable to communicate in other ways. Eventually, however, the technology could radically transform the way we all interact with the world around us, and even with each other.

"In the next few years, we will begin to see these technologies being commercialised and deployed at scale," says Maitreyee Wairagkar, a neuroengineer who has been developing brain-computer interfaces at the neuroprosthetics laboratory at the University of California, Davis, in the US. Several companies, including Elon Musk's Neuralink, are already seeking to produce commercial brain chips that will bring this technology out of the lab and into the real world. "It's very exciting," says Wairagkar.

Scientists have been working on devices capable of com

Source: HackerNews