Tools: From Hackathon Hustle To Gemini Glory: My SyncroFlow Journey

It all started with the adrenaline-fueled chaos of the Ant Media Hackathon. The air buzzed with caffeine, code, and the collective ambition of developers eager to build something impactful. My goal? To create a visual logic engine that could transform complex AI video pipelines into intuitive, drag-and-drop nodes. This vision became SyncroFlow, and at its heart, powering the intelligence, was Google Gemini.

[_**SyncroFlow Demo Video**_](https://www.youtube.com/watch?v=z0_sX_81Mok)

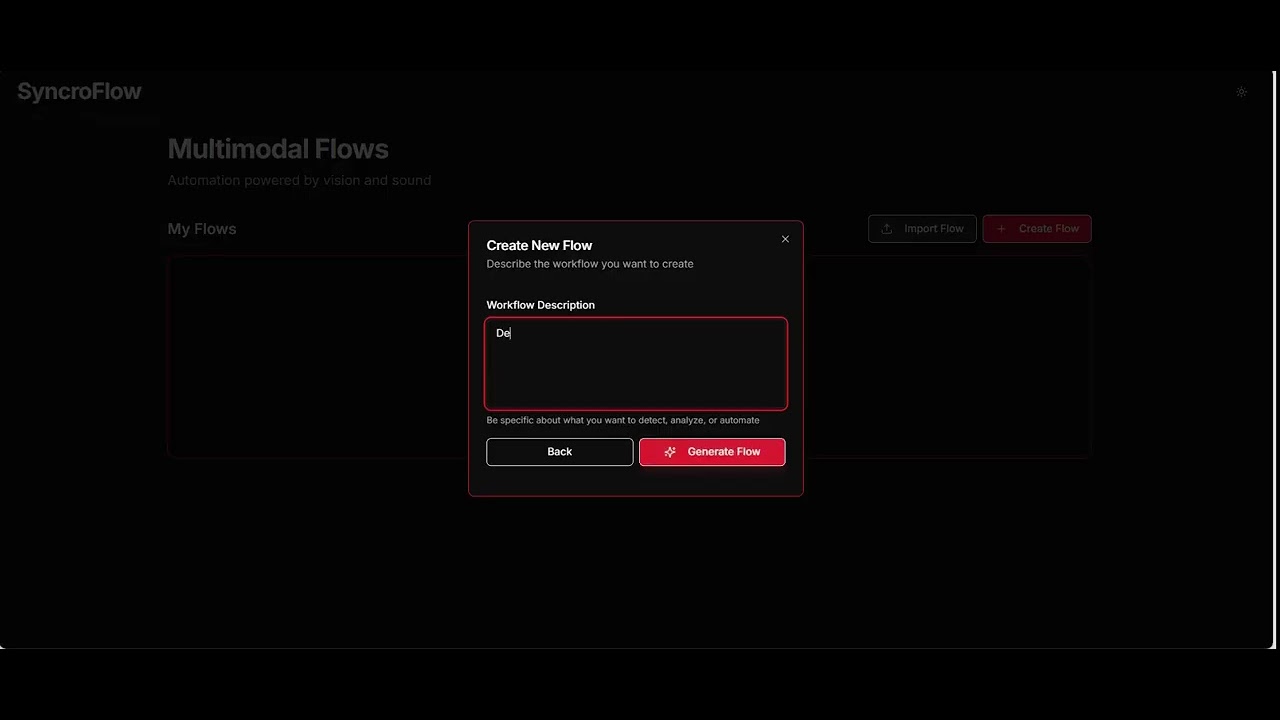

A visual logic engine for real-time video intelligence that transforms complex AI video pipelines into simple, drag-and-drop nodes using ultra-low latency WebRTC streaming.

SyncroFlow enables users to build custom computer vision and AI monitoring tools in seconds without requiring weeks of development. It combines drag-and-drop visual programming with AI-powered video analysis capabilities.

- Frontend: React 18, Vite, React Flow, Tailwind CSS
- Backend API: Node.js / Express
- AI/Vision Backend: Python, FastAPI, YOLOv8
- Video Infrastructure: Ant Media Server, WebRTC, RTMP
- AI…

The spark for SyncroFlow came from Google Gemini itself. It wasn't just a tool I used to build; it was the partner that helped me think of the solution in the first place. This "full circle" moment, where Gemini helped me identify the problem and then gave me the means to solve it, showed me what it can do as a creative and analytical partner.

SyncroFlow is a real-time video intelligence platform designed to empower users to build custom computer vision and AI monitoring tools in seconds, without needing weeks of development. Imagine a drag-and-drop interface where you can connect nodes to detect objects, analyze human poses, and even transcribe audio, all streaming with ultra-low latency via WebRTC.
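To make the "connect nodes" idea concrete, here is a minimal sketch of how a node graph like this could be evaluated. It is not SyncroFlow's actual engine: `run_graph`, the node names, and the lambda stages are all hypothetical placeholders, and the only assumption is that each node runs once after the nodes it depends on.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List

# A node is any callable that receives its upstream results keyed by node id.
NodeFn = Callable[[Dict[str, Any]], Any]


def run_graph(nodes: Dict[str, NodeFn], edges: Dict[str, List[str]]) -> Dict[str, Any]:
    """Run every node once, after all of its upstream nodes.

    `edges` maps a node id to the ids of the nodes it depends on.
    """
    # Nodes with no listed dependencies still need to be scheduled.
    deps = {name: edges.get(name, []) for name in nodes}
    results: Dict[str, Any] = {}
    # static_order() yields each node only after its dependencies.
    for name in TopologicalSorter(deps).static_order():
        upstream = {dep: results[dep] for dep in deps[name]}
        results[name] = nodes[name](upstream)
    return results


# Placeholder stages standing in for a video source, a detector, and a rule.
nodes = {
    "camera": lambda _: {"frame_id": 1, "labels": ["person", "dog"]},
    "detect": lambda up: up["camera"]["labels"],
    "alert": lambda up: "person" in up["detect"],
}
edges = {"detect": ["camera"], "alert": ["detect"]}

results = run_graph(nodes, edges)
```

In a real pipeline the lambdas would be replaced by stages that pull frames from a stream and call a model, but the wiring stays the same: the canvas defines the edges, and the engine decides the run order.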

Google Gemini, accessed through the OpenRouter API, was the brain behind SyncroFlow's analytical capabilities. Specifically, I leveraged google/gemini-2.5-flash for both visual and audio analysis. This allowed SyncroFlow to:

This integration was crucial. It meant that SyncroFlow wasn't just about detecting things... it was about understanding and interpreting them, bringing a new layer of intelligence to real-time video streams.
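For anyone curious what a call like this might look like, below is a minimal sketch of sending a single video frame to `google/gemini-2.5-flash` through OpenRouter's OpenAI-compatible chat completions endpoint. It is an illustration under assumptions, not the code SyncroFlow ships: the helper names `build_frame_request` and `analyze_frame`, the prompt, and the choice to send one base64-encoded JPEG per request are all mine.

```python
import base64
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL = "google/gemini-2.5-flash"


def build_frame_request(jpeg_bytes: bytes, prompt: str) -> dict:
    """Build an OpenAI-style chat payload carrying one JPEG frame (hypothetical helper)."""
    encoded = base64.b64encode(jpeg_bytes).decode("ascii")
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{encoded}"},
                    },
                ],
            }
        ],
    }


def analyze_frame(jpeg_bytes: bytes, prompt: str, api_key: str) -> str:
    """POST the payload and return the model's text reply (hypothetical helper)."""
    payload = build_frame_request(jpeg_bytes, prompt)
    request = urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    # OpenAI-compatible responses put the reply in the first choice.
    return body["choices"][0]["message"]["content"]
```

Calling `analyze_frame(frame_bytes, "Describe what is happening in this frame.", api_key)` would return the model's description as plain text. Keeping the payload builder separate from the network call makes the request shape easy to inspect without spending API credits.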

What's even more remarkable is that a significant portion of SyncroFlow's codebase: from the front

Source: Dev.to